3.3.1.1

The institution identifies expected outcomes, assesses the extent to which it achieves these outcomes, and provides evidence of improvement based on analysis of the results in each of the following areas: (Institutional effectiveness)

3.3.1.1. educational programs, to include student learning outcomes

Compliance Judgment

[X] In compliance   [ ] Partially compliant   [ ] Non-compliant

Narrative

As part of its core mission, Francis Marion University is committed to the continuous improvement of educational programs based on evidence from a systematic assessment of student learning. As part of this effort, each program at the University identifies expected student learning outcomes, assesses whether its students achieve those outcomes, and uses the results of those assessments to improve the programs.

Francis Marion University uses both internal and external reporting cycles to ensure the effectiveness of its programs and services. All academic degree programs and support offices of the University complete an annual assessment to evaluate their “success in meeting program goals and missions.” Each assessment report contains:

- Program Mission Statement

- Program Learning Outcomes (PLOs)

- Executive Summary

- Student Learning Outcomes (SLOs)

- Assessment Methods

- Assessment Results

- Action Items (use of results)

Academic Planning Process

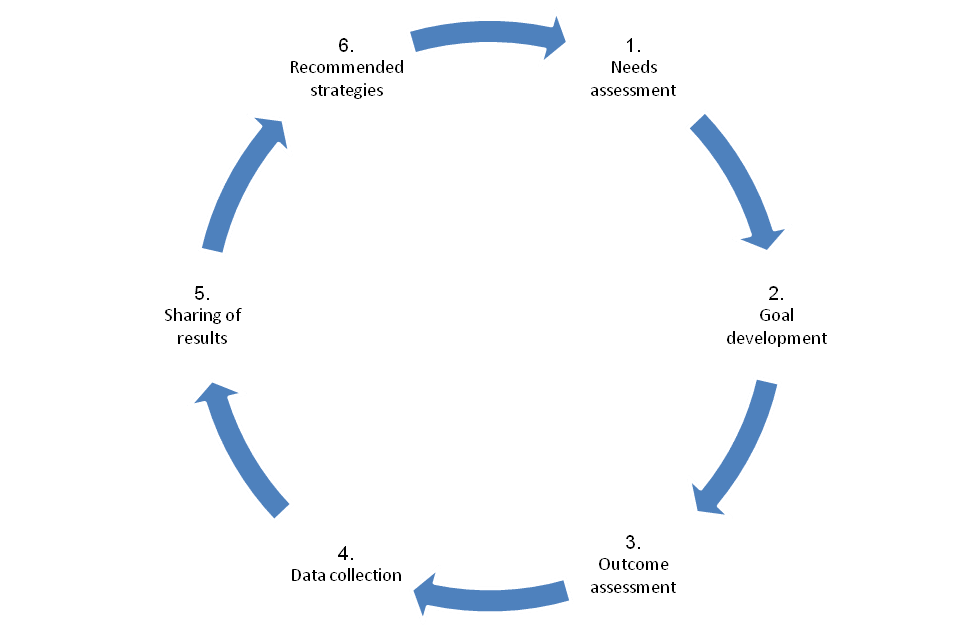

The processes for Program/Unit planning and institutional effectiveness include an evaluation by academic departments and unit staff persons driven by identified goals and missions. Figure 1 provides a graphical representation of the cyclical planning and evaluation process.

Figure 1. Program/Unit planning and evaluation process

In the “Needs Assessment” phase, each department or program reviews the extent to which it is accomplishing its mission and develops preliminary plans for the upcoming year based on the action items identified in the previous year. There is also a review of the budget implications for any proposed change. In the “Goal Development” phase, each department or program finalizes its vision, goals, and goal indicators for the upcoming year with assistance from the Office of Institutional Effectiveness. During this phase, unit and student learning outcomes are refined.

In the “Outcome Assessment” phase, assessment procedures are determined and the implementation period begins. A description of the methods and procedures is provided during this phase. In the “Data Collection” phase, information is gathered in order to evaluate the stated goals. In the “Sharing of Results” phase, preliminary outcome evaluations are collected, and determinations are made as to whether the targets were achieved. In the “Recommended Strategies” phase, Institutional Effectiveness Reports are finalized, and action items are developed for the upcoming year.

At Francis Marion University, assessment of student learning to improve educational programs is part of the integrated process for planning, evaluation, and change implementation that permeates the institution. This system is described fully in Core Requirement 2.5.

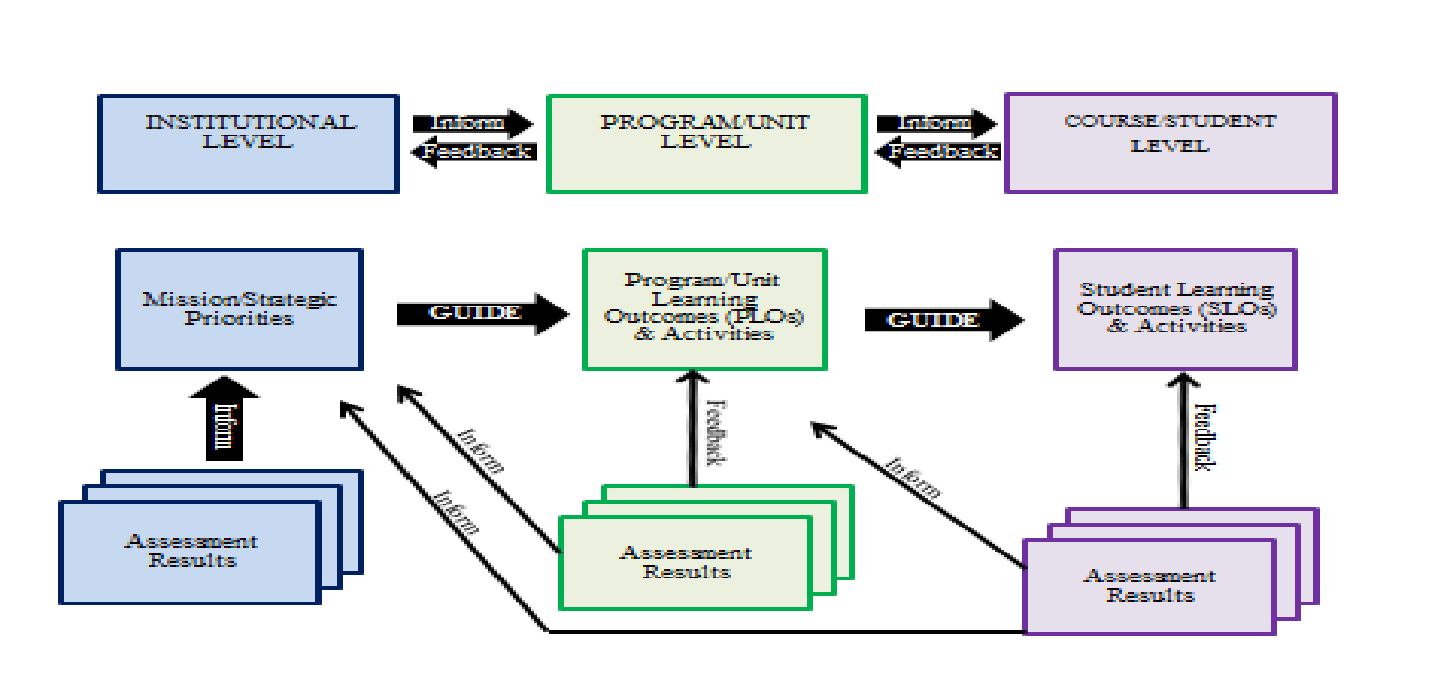

Figure 2 presents a conceptual overview of the main elements of the assessment of educational programs, including the assessment of student learning, in the context of this integrated process.

Figure 2. Three Level Integrated Planning and Assessment Model

At the Institutional Level, the Francis Marion University Strategic Plan [1] is crucial for the success of the planning and evaluation process. Each year institutional priorities are reviewed, assessed, and modified as necessary. At the Program/Unit Level, planning follows the Institutional Model; each Program Coordinator/Unit Director works with faculty/staff to develop a plan for their area that supports the institutional goals and objectives. At the Course/Student Level, the annual Institutional Effectiveness Report guides course-level planning.

The annual process for generating Institutional Effectiveness (IE) reports reflects the University’s commitment to faculty-initiated curricular changes. The IE reports are submitted for review to the Office of Institutional Effectiveness and the University’s Institutional Effectiveness Committee (IEC) for compilation and summary in the annual Francis Marion University Institutional Effectiveness Report. The action plans in these reports document, across the disciplines, the use of data to drive decisions, and they show that findings are applied first within the departments themselves.

The Institutional Effectiveness Committee is composed of six faculty members elected from the general faculty for three-year terms as shown on the Faculty Governance website [2]. The committee recommends assessment instruments for the evaluation of academic and degree programs and support services.

Institutional Effectiveness Reports for all academic schools and departments are available for review [3]. In each report the academic unit presents the goals, assessment techniques, results, and actions initiated during the year. The institutional effectiveness activities of the departments and schools range from minor adjustments to the total revision of a program.

Examples of Student Learning Outcomes Assessment

As noted, each educational program at the University is responsible for identifying and assessing learning outcomes of its students. This includes documenting assessment activities in comprehensive annual reports.

The reports linked in Table 1 below provide specific examples of the University’s student learning outcomes assessment activities. These reports are representative: they cover the assessment practices of undergraduate, graduate, and professional programs and illustrate the breadth of educational programs offered at the University.

| IE Reports 2016-2017 | IE Reports 2015-2016 |
|---|---|
| Biology Department [4] | Biology Department [19] |
| Business School -- BS in Computer Science [5] | Business School -- BS in Computer Science [20] |
| Business School -- Masters of Business Administration [6] | Business School -- Masters of Business Administration [21] |
| Chemistry Department [7] | Chemistry Department [22] |
| School of Education [8] | School of Education [23] |
| English Composition Program [9] | English Composition Program [24] |
| Fine Arts Department -- Visual Arts [10] | Fine Arts Department -- Visual Arts [25] |
| Mass Communication Department [11] | Mass Communication Department [26] |
| Mathematics Department [12] | Mathematics Department [27] |
| School of Nursing -- MSN-FNP Program [13] | School of Nursing -- MSN-FNP Program [28] |
| Political Science and Geography Department [14] | Political Science and Geography Department [29] |
| Physics and Astronomy Department [15] | Physics and Astronomy Department [30] |
| Psychology Department -- Bachelor of Science (B.S.) [16] | Psychology Department -- Bachelor of Science (B.S.) [31] |
| Psychology Department -- M.S. Applied Psychology & School Psychology [17] | Psychology Department -- M.S. Applied Psychology & School Psychology [32] |
| Sociology Department [18] | Sociology Department [33] |

Table 1. Sampling of 2016-2017 & 2015-2016 IE Reports

The faculty of the University, through its Institutional Effectiveness Committee, accepts the ongoing evaluation of the institutional effectiveness system as an important component of its responsibilities to the institution. The Institutional Effectiveness Committee pilot tested a report evaluation rubric in 2016-2017 [34], using it to review assigned programs [35]. In addition to sharing feedback with each program [36], the committee developed a summary report [37], and the information will be used to make the process more efficient next year.

Table 2 below provides concise examples of specific actions taken by programs to assess outcomes, along with evidence of improvement based on analysis of results. To address concerns identified in the evaluation of data from the 2015-2016 academic year, the selected programs developed the following action plans to be implemented during the 2016-2017 academic year.

| Unit | Actions |
|---|---|
| Biology Department | Data collected during the fall of 2015 and the spring of 2016 using selected questions from a departmental common exit exam indicated an overall average of fifty-six percent (56%). The department set a target of 60%. Since the target was not achieved, the department made changes to improve Student Learning Outcomes. In order to improve performance in this area, the Biology Department has incorporated additional critical thinking practice in all classes across the curriculum. Based on data collected during the 2015-2016 academic year, the department decided to move beyond reliance on the common Biology exit exam to assess student learning outcomes germane to the identification of key concepts in the core areas and the application of the scientific approach. Starting in 2016-2017, to better assess the extent to which identified program modifications impact student learning, the Department has implemented a process that utilizes rubrics, case studies, selected items from examinations, and laboratory projects. |
| School of Business--Computer Science | The Computer Science faculty set as a goal for 2016-2017 that 100% of students would exceed the target, especially in the areas of oral and written communications and ethics. The Department of Computer Science developed the following action plan to be implemented during the 2016-2017 academic year: 1. Oral Communication: Modify the junior-year presentation in CS 340 so that the review and evaluation of the presentation is done by a panel reviewing a videotaped presentation. This experience and analysis will provide more diverse feedback and consequently effect a positive change in students’ senior capstone presentations at the Computer Science Symposium. 2. Written Communications: In collaboration with professors in the English Department, modify English 318, Technical Writing, so that the course includes an emphasis on content organization and depth of discussion. 3. Ethics: Modify the junior-year CS 340 course by providing students with multiple examples of how to approach the discussions in the ACM/IEEE Software Engineering Code of Ethics module before beginning the assignment. Based on data from 2015-2016 (the presentation rubric), the CS faculty concluded that this pre-exposure will result in broader and deeper discussions. |
| School of Education | Based on the Praxis II data, the Department will monitor the Middle Level ELA program. A mean below the passing score could be a statistical anomaly, so the program will be monitored to determine whether programmatic change is needed. Data from the Exit Interviews in 2015-2016 validated changes the Department made to the planning/assessment course as well as the 390 series last year. During the 2014-2015 academic year, the department collected data that suggested deficiencies in the EDU 390 Series in planning and assessment. The method of assessment was a “mini” Teacher Candidate Work Sample, and the data suggested a weakness in writing measurable objectives as well as in alignment among objectives and assessments. Based on this finding, the department made changes to bring about improvement in Student Learning Outcomes. The curriculum for the planning/assessment (EDUC 311) course was revised to include an emphasis on writing measurable objectives as well as aligning objectives, procedures, and assessments as a part of the planning process. In addition, the 390 series was revised to more closely duplicate student teaching projects. While no direct measures showed deficiencies with classroom management, the indirect measure of this variable from the exit interview revealed a need for improvement. Based on this finding, the department made changes in the area of classroom management. First, the instructional approach will be modified to include more relevant instruction related to classroom management as well as an effort to make instruction relevant to all levels (ECE, ELE, MLE, and Secondary). In addition to these pedagogical changes, the department also investigated moving the classroom management course into the instructional block preceding student teaching. However, this move is not feasible at this time because of scheduling issues. |
| Mass Communication Department | Based on data collected during the 2015-2016 academic year using a Departmental Posttest form, Mass Comm students performed, on average, at the 80% level when classifying salient aspects of current trends and issues in mass communication. Even though the target of 80% (minimum performance threshold) was achieved for this student learning outcome, the department decided to make changes to bring about improved student achievement. First, a new textbook has been adopted for Mass Comm 110. This new textbook will provide updated information to help students understand and classify salient aspects of current trends and issues in mass communication. The new text will also provide supplemental digital content and a test bank. The department will use this test bank to develop a more objective “direct measure” of student learning. Based on information provided by the Departmental Posttest form, the Mass Communication Department has developed a new Pre/Posttest process for AY 2016-2017. The Department will use the pretest information to diagnose levels of understanding in classifying salient aspects of current trends and issues in mass communication in order to modify teaching and learning activities during the term and to improve measurement of student attainment at the posttest. Based on data collected during the 2015-2016 academic year using a Departmental Posttest form, Mass Comm students performed, on average, at the 74% level when describing and identifying key issues germane to writing and editing for print, broadcast, and public relations. The target of 80% was not achieved for this student learning outcome. Since the target was not achieved, the department decided to make changes to bring about improved student achievement. Based on a review of the data, Mass Comm faculty concluded that students needed more application opportunities in the curriculum. Therefore, students in Mass Comm 201, 221, & 301 will engage in Authentic Learning activities in AY 2016-2017. Instructors in these courses have developed activities for their students that match as nearly as possible the real-world tasks of mass communication professionals in the field. The tasks students are required to undertake are complex, ambiguous, and multifaceted in nature, requiring sustained investigation. The expectation is that this inquiry will draw on the existing talents and experiences of students, building their understanding of course content through participation. The department will also utilize a new Pre/Posttest process that will provide a more objective “direct measure” of student learning. |
| Political Science and Geography Department | Based on data collected during the 2015-2016 academic year using a Francis Marion University Political Science examination, Francis Marion University Political Science majors, on average, performed at the 57% level. Since our goal was 60%, this target was not achieved. Based on this finding, the department made changes in its curricular approach. First, the instructional approach will be modified to include direct treatment of the US Constitution and principles of federalism in all introductory political science courses (i.e., POL 101 and POL 103); second, two questions will be asked on the first exam in all POL 101 and POL 103 sections to assess student understanding. In addition to these pedagogical changes, the Department of Political Science and Geography has created a new assessment method for assessing the extent to which students can describe and explain content areas in political science, specifically explaining and describing the United States Constitution and Federalist Papers. The department, through discussion and collaboration, reached the conclusion that relying on an examination to determine this competency was restrictive and did not provide an opportunity to fully understand what students knew, how they thought, or what they could do. We will pilot test this new approach during fall 2016 and deploy it fully in spring 2017. Based on data collected during the 2015-2016 academic year using a Francis Marion University Political Science examination, Francis Marion University Political Science majors, on average, performed at the 58% level. Since our benchmark was 60%, this target was not achieved. Based on this finding, the department made changes in its curricular approach. First, the instructional approach was modified by a complete re-drafting of the political science research methods (POL 295) syllabus to include: 1. a new political analysis textbook for POL 295 that places a greater emphasis on statistical analysis; 2. the creation of four new problem sets as homework for the course that treat these concepts more directly, prior to the exam; and 3. the addition of days spent on statistical analysis training to the course syllabus. The department will continue to use the same benchmark exam question from the 2015-2016 year to determine whether the above curriculum changes improved student learning in this area. |
| Psychology Department--Graduate | Scores on the Praxis II Examination necessary for certification and licensure in school psychology were received for all seven students completing internships in the School Psychology Option. The seven program completers received scores on the Praxis II, which was revised and implemented this year. The mean score for these seven completers was 169.86, with individual scores ranging from 161 to 183. The required cut-score for certification of school psychologists in South Carolina and North Carolina has been set at 147. By these evaluative criteria, all graduates exceeded the examination requirements for certification in their anticipated states of practice. Graduates of the program have traditionally achieved a 100% pass rate for the required certification and licensure examination, and this year’s graduates continue that tradition. This target was achieved. No action is required at this time. To assess our learning goal of communicating psychological concepts, the program assesses the evaluation reports that are provided to parents and schools. A 5-point rating rubric, ranging from 5 (Attends to all data/issues; Applies data in sophisticated manner; Sound conclusions/data-based recommendations) to 1 (Fails to attend to, consider, or address appropriate data and/or issues), is used. First-year students are required to obtain ratings greater than 50% on all reports. Second-year students must meet or exceed a criterion rating of 60% on all reports. Interns must meet or exceed a criterion of 70%. Results from these data indicate that each cohort met or exceeded the minimum criterion that was set. First-year students averaged 59% on their reports; second-year students averaged 69% on their reports; interns averaged 82% on their reports. There is an increase across each year and each portion of the report, indicating that students are becoming more effective communicators of the psychological concepts that they are learning in their coursework. This target was achieved. |
| Sociology Department | Based on data collected during the 2015-2016 academic year using a pre-test/post-test of majors as a direct measure, it was determined that the target (70%) was not achieved for SLO 1.0. On the pre-test/post-test form, students performed on average at the 38% level on a 100-point scale. Since the target was not achieved for the identification and application of sociological imagination, the department made changes to bring about improvement in this student learning outcome. The curriculum for all Sociology courses beyond the introductory level has been revised to include an emphasis on the sociological imagination. Course lectures have been revised to cover the definition of sociological imagination and its application to the substantive topics of the particular course being taught. The Department has also implemented a process to distinguish the sociological approach in Sociology from the approach of other disciplines (i.e., psychology, economics, political science, biology). The Department has also modified existing course projects for students, requiring recognition of and an emphasis on the factors outside of the individual that influence people’s lives and how these influences are experienced. Based on data collected during the 2015-2016 academic year using a senior exit survey of graduating Sociology majors, it was determined that the average rating of 4.56 did not meet or exceed the target of 5.0. On the exit exam, a benchmark of 78% (baseline = 75%) was established for graduating sociology majors’ ability to identify and apply theoretical perspectives. In spring 2016, students performed on average at the 69% level on a 100-point scale on this assessment. Since our goal was 78%, our target was not achieved. Since the targets were not achieved for the ability to identify and apply different theoretical perspectives to societal issues, the department made changes to bring about improvement in this student learning outcome. First, the department has increased the level of emphasis on theory application across courses to address weaknesses in this SLO. In addition, students will be required to examine, explain, and evaluate theory with more specificity through course assignments and projects that require them to immerse themselves in the work of various theorists and theoretical perspectives and be able to apply those ideas to current social events. |
| Department of Nursing-- RN to BSN Option | Data collected in NRN 332 and NRN 445, requiring students to demonstrate understanding of topics by analyzing and discussing case scenarios in discussion boards, indicated that 100% of students met or exceeded the 80% target for this outcome. The target of 80% was achieved. Data collected in NRN 449, which required students to identify, explain, and justify a health care need by creating a stakeholder letter and developing a quality improvement project, indicated that > 90% of students identified, explained, and justified a health care need after creating a stakeholder letter and developing a quality improvement project. The target of 80% was achieved. Data collected in NRN 333, where students demonstrated proficiency by performing a physical assessment check-off, indicated that > 90% of students demonstrated proficiency in performing a physical assessment check-off. The target of 80% was achieved. Data collected in NRN 448, where students demonstrated understanding of topics through explanation and analysis in discussion boards, indicated that > 90% of students demonstrated understanding of topics through explanation and analysis in discussion boards. The target of 80% was achieved. |

Table 2. Sampling of Specific Actions Taken for Program Improvements.

Documentation

- [1] Francis Marion University Strategic Plan

- [2] IE Committee Membership 2016-2017

- [3] Francis Marion University Website, IE Reports

- [4] Biology Department IE Report 2016-2017

- [5] Computer Science IE Report 2016-2017

- [6] MBA IE Report 2016-2017

- [7] Chemistry Department IE Report 2016-2017

- [8] School of Education IE Report 2016-2017

- [9] English Composition Program IE Report 2016-2017

- [10] Visual Arts Program IE Report 2016-2017

- [11] Mass Communication IE Report 2016-2017

- [12] Mathematics IE Report 2016-2017

- [13] School of Nursing MSN-FNP Program IE Report 2016-2017

- [14] Political Science & Geography IE Report 2016-2017

- [15] Physics IE Report 2016-2017

- [16] Undergraduate Psychology IE Report 2016-2017

- [17] Graduate Psychology IE Report 2016-2017

- [18] Sociology Department IE Report 2016-2017

- [19] Biology Department IE Report 2015-2016

- [20] Computer Science IE Report 2015-2016

- [21] MBA IE Report 2015-2016

- [22] Chemistry Department IE Report 2015-2016

- [23] School of Education IE Report 2015-2016

- [24] English Composition Program IE Report 2015-2016

- [25] Visual Arts Program IE Report 2015-2016

- [26] Mass Communication IE Report 2015-2016

- [27] Mathematics IE Report 2015-2016

- [28] School of Nursing MSN-FNP Program IE Report 2015-2016

- [29] Political Science & Geography IE Report 2015-2016

- [30] Physics IE Report 2015-2016

- [31] Undergraduate Psychology IE Report 2015-2016

- [32] Graduate Psychology IE Report 2015-2016

- [33] Sociology Department IE Report 2015-2016

- [34] IE Report Rubric 2017

- [35] IE Committee Report Review Assignments

- [36] Sample Individual Program IE Report Feedback

- [37] IE Committee Rubric Summary Report-Partial